-

admin wrote a new post 1 month ago

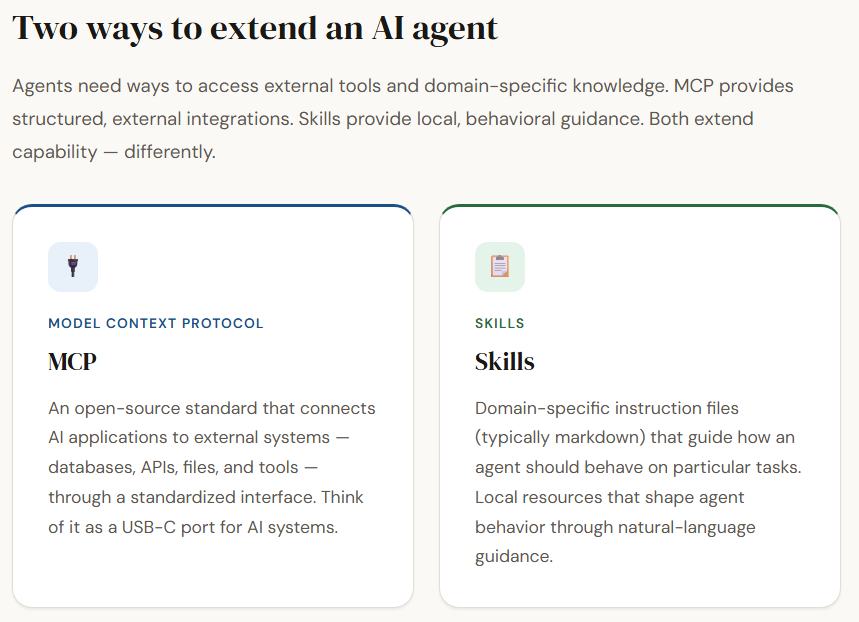

A Beginner’s Guide to Building Autonomous AI Agents with MaxClaw

Most AI tools forget you as soon as you close the browser window. The system […]

-

admin wrote a new post 1 month ago

Why physical AI is becoming manufacturing’s next advantage

For decades, manufacturers have pursued automation to drive efficiency, reduce costs, […]

-

admin wrote a new post 1 month ago

The Download: how AI is used for military targeting, and the Pentagon’s war on Claude

This is today’s edition of The Download, our weekday n […]

-

admin wrote a new post 1 month ago

Future AI chips could be built on glass

Human-made glass is thousands of years old. But it’s now poised to find its way into the AI chips used i […]

-

admin wrote a new post 1 month ago

AI vs Generative AI: Key Differences, Models, and Real-World Uses

Tools like ChatGPT, Gemini, and Claude pushed AI into everyday conversations. […]

-

admin wrote a new post 1 month ago

Anthropic Says AI is Not “Killing Jobs”, Shares New Way to Measure AI Job Impact

This is not another of those ‘AI is killing jobs’ reports. Ant […]

-

admin wrote a new post 1 month ago

Protecting cities with AI-driven flash flood forecasting

Climate & Sustainability

-

admin wrote a new post 1 month ago

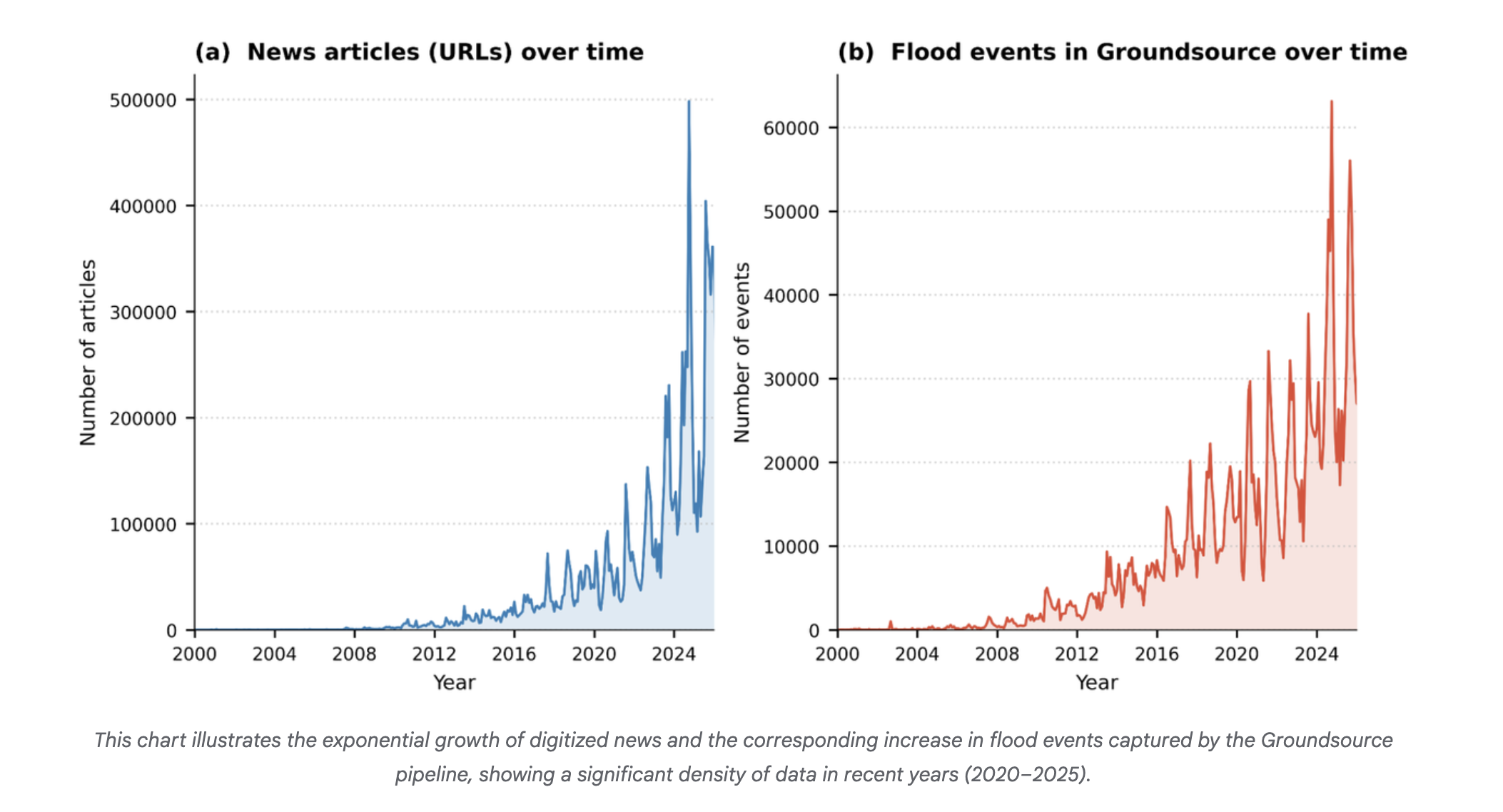

Introducing Groundsource: Turning news reports into data with Gemini

Climate & Sustainability

-

admin wrote a new post 1 month ago

How to Build an Autonomous Machine Learning Research Loop in Google Colab Using Andrej Karpathy’s AutoResearch Framework for Hyperparameter Discovery and Experiment Tracking

In this tutorial, we implement a Colab-ready version of the AutoResearch framework originally proposed by Andrej Karpathy. We build an automated experimentation pipeline that clones the AutoResearch repository, prepares a lightweight training environment, and runs a baseline experiment to establish initial performance metrics. We then create an automated research loop that programmatically edits the hyperparameters in train.py, runs new training iterations, evaluates the resulting model using the validation bits-per-byte metric, and logs every experiment in a structured results table. Running this workflow in Google Colab reproduces the core idea of autonomous machine learning research (iteratively modifying training configurations, evaluating performance, and preserving the best configurations) without specialized hardware or complex infrastructure.

```python
import os, sys, subprocess, json, re, random, shutil, time
from pathlib import Path

def pip_install(pkg):
    # Install a package into the current interpreter's environment.
    subprocess.check_call([sys.executable, "-m", "pip", "install", "-q", pkg])

# Install each dependency only if it cannot be imported yet.
for pkg in ["numpy", "pandas", "pyarrow", "requests", "rustbpe", "tiktoken", "openai"]:
    try:
        __import__(pkg)
    except ImportError:
        pip_install(pkg)

import pandas as pd

# Clone the repository once, then work from inside it.
if not Path("autoresearch").exists():
    subprocess.run(["git", "clone", "https://github.com/karpathy/autoresearch.git"])
os.chdir("autoresearch")

# Read the API key from Colab secrets, falling back to the environment.
OPENAI_API_KEY = None
try:
    from google.colab import userdata
    OPENAI_API_KEY = userdata.get("OPENAI_API_KEY")
except Exception:
    OPENAI_API_KEY = os.environ.get("OPENAI_API_KEY")

if OPENAI_API_KEY:
    os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY
```

We begin by importing the core Python libraries required for the automated research workflow. We install all necessary dependencies and clone the autoresearch repository directly from GitHub, ensuring the environment includes the original training framework. We also configure access to the OpenAI API key, if available, allowing the system to optionally support LLM-assisted experimentation later in the pipeline.

```python
prepare_path = Path("prepare.py")
train_path = Path("train.py")
program_path = Path("program.md")

prepare_text = prepare_path.read_text()
train_text = train_path.read_text()

# Shrink the data and evaluation settings in prepare.py.
prepare_text = re.sub(r"MAX_SEQ_LEN = \d+", "MAX_SEQ_LEN = 512", prepare_text)
prepare_text = re.sub(r"TIME_BUDGET = \d+", "TIME_BUDGET = 120", prepare_text)
prepare_text = re.sub(r"EVAL_TOKENS = .*", "EVAL_TOKENS = 4 * 65536", prepare_text)

# Shrink the model and batch settings in train.py.
train_text = re.sub(r"DEPTH = \d+", "DEPTH = 4", train_text)
train_text = re.sub(r"DEVICE_BATCH_SIZE = \d+", "DEVICE_BATCH_SIZE = 16", train_text)
train_text = re.sub(r"TOTAL_BATCH_SIZE = .*", "TOTAL_BATCH_SIZE = 2**17", train_text)
train_text = re.sub(r'WINDOW_PATTERN = "SSSL"', 'WINDOW_PATTERN = "L"', train_text)

prepare_path.write_text(prepare_text)
train_path.write_text(train_text)

program_path.write_text("""
Goal:
Run autonomous research loop on Google Colab.

Rules:
Only modify train.py hyperparameters.

Metric:
Lower val_bpb is better.
""")

# Download and prepare the dataset shards.
subprocess.run(["python", "prepare.py", "--num-shards", "4", "--download-workers", "2"])
```

We modify key configuration parameters inside the repository to make the training workflow compatible with Google Colab hardware. We reduce the context length, training time budget, and evaluation token counts so the experiments run within limited GPU resources. After applying these patches, we prepare the dataset shards required for training so that the model can immediately begin experiments.
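The configuration patching described above relies on regular-expression substitution over the source text of a Python file. The following is a minimal sketch of that idea on an in-memory string, not the tutorial's own code: `SOURCE`, its default values, and the helper `patch_constant` are hypothetical names introduced only for illustration. Unlike a bare `re.sub`, the helper counts substitutions, so a pattern that matches nothing raises an error instead of silently leaving the file unchanged.

```python
import re

# Hypothetical stand-in for a few lines of a training config file;
# the real prepare.py / train.py defaults may differ.
SOURCE = """\
MAX_SEQ_LEN = 2048
TIME_BUDGET = 300
DEPTH = 8
"""

def patch_constant(text: str, name: str, value: str) -> str:
    """Replace the right-hand side of a top-level `NAME = ...` assignment."""
    pattern = rf"^{re.escape(name)}\s*=.*$"
    # A callable replacement avoids backslash/group interpretation in `value`.
    new_text, count = re.subn(
        pattern, lambda m: f"{name} = {value}", text, flags=re.MULTILINE
    )
    if count != 1:
        raise ValueError(f"expected exactly one assignment to {name}, found {count}")
    return new_text

patched = patch_constant(SOURCE, "MAX_SEQ_LEN", "512")
patched = patch_constant(patched, "TIME_BUDGET", "120")
print(patched)
```

Failing loudly matters here because a regex such as `\d+` that loses its backslash still compiles, matches nothing, and would leave the original hyperparameters in place without any warning.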
```python
# Run the baseline and capture stdout and stderr in a log file.
subprocess.run("python train.py > baseline.log 2>&1", shell=True)

def parse_run_log(log_path):
    text = Path(log_path).read_text(errors="ignore")

    def find(p):
        # Return the first captured number as a float, or None if absent.
        m = re.search(p, text, re.MULTILINE)
        return float(m.group(1)) if m else None

    return {
        "val_bpb": find(r"^val_bpb:\s*([0-9.]+)"),
        "training_seconds": find(r"^training_seconds:\s*([0-9.]+)"),
        "peak_vram_mb": find(r"^peak_vram_mb:\s*([0-9.]+)"),
        "num_steps": find(r"^num_steps:\s*([0-9.]+)"),
    }

baseline = parse_run_log("baseline.log")

results_path = Path("results.tsv")

rows = [{
    "commit": "baseline",
    "val_bpb": baseline["val_bpb"] if baseline["val_bpb"] else 0,
    "memory_gb": round((baseline["peak_vram_mb"] or 0) / 1024, 1),
    "status": "keep",
    "description": "baseline",
}]

pd.DataFrame(rows).to_csv(results_path, sep="\t", index=False)

print("Baseline:", baseline)
```

We execute the baseline training run to establish an initial performance reference for the model. We implement a log-parsing function that extracts key training metrics, including validation bits-per-byte, training time, GPU memory usage, and optimization steps. We then store these baseline results in a structured experiment table so that all future experiments can be compared against this starting configuration.
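The log-parsing step described above can be tried without running any training. The sketch below applies the same `name: value` pattern to a made-up log tail; `SAMPLE_LOG` and its numbers are hypothetical, and the real train.py output may contain other lines or fields. The helper name `find_metric` is also an assumption for this example.

```python
import re

# Hypothetical log tail, invented for illustration only.
SAMPLE_LOG = """\
step 100 | loss 3.21
val_bpb: 1.2345
training_seconds: 118.7
peak_vram_mb: 6144.0
"""

def find_metric(text, name):
    """Return the number after `name:` at the start of a line, or None."""
    m = re.search(rf"^{re.escape(name)}:\s*([0-9.]+)", text, re.MULTILINE)
    return float(m.group(1)) if m else None

metrics = {
    key: find_metric(SAMPLE_LOG, key)
    for key in ("val_bpb", "training_seconds", "peak_vram_mb", "num_steps")
}

# Convert MB to GB the same way the results table does, treating None as 0.
memory_gb = round((metrics["peak_vram_mb"] or 0) / 1024, 1)

print(metrics)
print("memory_gb:", memory_gb)
```

A metric that never appears in the log comes back as `None` (here `num_steps`), which is one way to tell a crashed or truncated run apart from a completed one before it is written to the results table.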
```python
TRAIN_FILE = Path("train.py")
BACKUP_FILE = Path("train.base.py")

# Keep a pristine copy of train.py so every candidate starts from the same base.
if not BACKUP_FILE.exists():
    shutil.copy2(TRAIN_FILE, BACKUP_FILE)

HP_KEYS = [
    "WINDOW_PATTERN", "TOTAL_BATCH_SIZE", "EMBEDDING_LR", "UNEMBEDDING_LR",
    "MATRIX_LR", "SCALAR_LR", "WEIGHT_DECAY", "ADAM_BETAS", "WARMUP_RATIO",
    "WARMDOWN_RATIO", "FINAL_LR_FRAC", "DEPTH", "DEVICE_BATCH_SIZE",
]

def read_text(path):
    return Path(path).read_text()

def write_text(path, text):
    Path(path).write_text(text)

def extract_hparams(text):
    # Collect the right-hand side of each `KEY = value` line as a string.
    vals = {}
    for k in HP_KEYS:
        m = re.search(rf"^{k}\s*=\s*(.+?)$", text, re.MULTILINE)
        if m:
            vals[k] = m.group(1).strip()
    return vals

def set_hparam(text, key, value):
    return re.sub(rf"^{key}\s*=.*$", f"{key} = {value}", text, flags=re.MULTILINE)

base_text = read_text(BACKUP_FILE)
base_hparams = extract_hparams(base_text)

# Candidate values are stored as source-code strings.
SEARCH_SPACE = {
    "WINDOW_PATTERN": ['"L"', '"SSSL"'],
    "TOTAL_BATCH_SIZE": ["2**16", "2**17", "2**18"],
    "EMBEDDING_LR": ["0.2", "0.4", "0.6"],
    "MATRIX_LR": ["0.01", "0.02", "0.04"],
    "SCALAR_LR": ["0.3", "0.5", "0.7"],
    "WEIGHT_DECAY": ["0.05", "0.1", "0.2"],
    "ADAM_BETAS": ["(0.8,0.95)", "(0.9,0.95)"],
    "WARMUP_RATIO": ["0.0", "0.05", "0.1"],
    "WARMDOWN_RATIO": ["0.3", "0.5", "0.7"],
    "FINAL_LR_FRAC": ["0.0", "0.05"],
    "DEPTH": ["3", "4", "5", "6"],
    "DEVICE_BATCH_SIZE": ["8", "12", "16", "24"],
}

def sample_candidate():
    # Change two to four randomly chosen hyperparameters per candidate.
    keys = random.sample(list(SEARCH_SPACE.keys()), random.choice([2, 3, 4]))
    cand = dict(base_hparams)
    changes = {}
    for k in keys:
        cand[k] = random.choice(SEARCH_SPACE[k])
        changes[k] = cand[k]
    return cand, changes

def apply_hparams(candidate):
    # Always patch the backup copy, never the previously edited train.py.
    text = read_text(BACKUP_FILE)
    for k, v in candidate.items():
        text = set_hparam(text, k, v)
    write_text(TRAIN_FILE, text)

def run_experiment(tag):
    log = f"{tag}.log"
    subprocess.run(f"python train.py > {log} 2>&1", shell=True)
    metrics = parse_run_log(log)
    metrics["log"] = log
    return metrics
```

We build the core utilities that enable automated hyperparameter experimentation. We extract the hyperparameters from train.py, define the searchable parameter space, and implement functions that can programmatically edit these values. We also create mechanisms to generate candidate configurations, apply them to the training script, and run experiments while recording their outputs.

```python
N_EXPERIMENTS = 3

df = pd.read_csv(results_path, sep="\t")
# Treat the placeholder 0 (missing metric) as a very poor score.
best = df["val_bpb"].replace(0, 999).min()

for i in range(N_EXPERIMENTS):
    tag = f"exp_{i+1}"
    candidate, changes = sample_candidate()
    apply_hparams(candidate)
    metrics = run_experiment(tag)
    if […]
```
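The hyperparameter-editing utilities described above can be exercised on a small in-memory stand-in for train.py, which makes it easy to confirm that sampling, patching, and re-extraction agree before any GPU time is spent. This is a sketch under stated assumptions: `BASE_SOURCE` and its defaults are hypothetical, the search space is a three-key subset, and `sample_candidate` here takes an explicit seeded `random.Random` so the run is repeatable. It does not reproduce the truncated experiment loop.

```python
import random
import re

# Hypothetical stand-in for train.py; the real defaults may differ.
BASE_SOURCE = """\
DEPTH = 4
MATRIX_LR = 0.02
WEIGHT_DECAY = 0.1
"""

# Small subset of a search space; values are kept as source-code strings.
SEARCH_SPACE = {
    "DEPTH": ["3", "4", "5", "6"],
    "MATRIX_LR": ["0.01", "0.02", "0.04"],
    "WEIGHT_DECAY": ["0.05", "0.1", "0.2"],
}

def extract_hparams(text):
    """Read the right-hand side of each `KEY = value` line as a string."""
    vals = {}
    for key in SEARCH_SPACE:
        m = re.search(rf"^{key}\s*=\s*(.+?)$", text, re.MULTILINE)
        if m:
            vals[key] = m.group(1).strip()
    return vals

def set_hparam(text, key, value):
    """Rewrite the `KEY = ...` line with a new value."""
    return re.sub(
        rf"^{key}\s*=.*$", lambda m: f"{key} = {value}", text, flags=re.MULTILINE
    )

def sample_candidate(base, rng, n_changes=2):
    """Pick `n_changes` keys and draw a new value for each from the search space."""
    keys = rng.sample(sorted(SEARCH_SPACE), n_changes)
    changes = {k: rng.choice(SEARCH_SPACE[k]) for k in keys}
    return {**base, **changes}, changes

rng = random.Random(0)  # fixed seed so the example is repeatable
base = extract_hparams(BASE_SOURCE)
candidate, changes = sample_candidate(base, rng)

# Apply every candidate value to the base text, then read the values back.
patched = BASE_SOURCE
for key, value in candidate.items():
    patched = set_hparam(patched, key, value)

round_trip_ok = extract_hparams(patched) == candidate
print("changes:", changes)
print("round trip ok:", round_trip_ok)
```

Starting each candidate from the untouched base text, as the tutorial does with its backup file, keeps edits from one experiment from leaking into the next.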

-

admin wrote a new post 1 month ago

A defense official reveals how AI chatbots could be used for targeting decisions

The US military might use generative AI systems to rank lists of […]

-

admin wrote a new post 1 month ago

The Download: Early adopters cash in on China’s OpenClaw craze, and US batteries slump

This is today’s edition of The Download, our weekday n […]

-

admin wrote a new post 1 month ago

Brutal times for the US battery industry

Just a few years ago, the battery industry was hot, hot, hot. There was a seemingly infinite number of […]

-

admin wrote a new post 1 month ago

MongoDB Compass: A Beginner-Friendly Guide to MongoDB’s Visual Interface

MongoDB is a widely used NoSQL database that stores data in flexible […]

-

admin wrote a new post 1 month ago

How to Use ChatGPT Like a Pro: 10 Workflows That Save You Hours Every Week

Do you also think ChatGPT is useless? If not, you must’ve come across s […]

